Egocentric stereo, depth, IMU, force-torque across real environments.

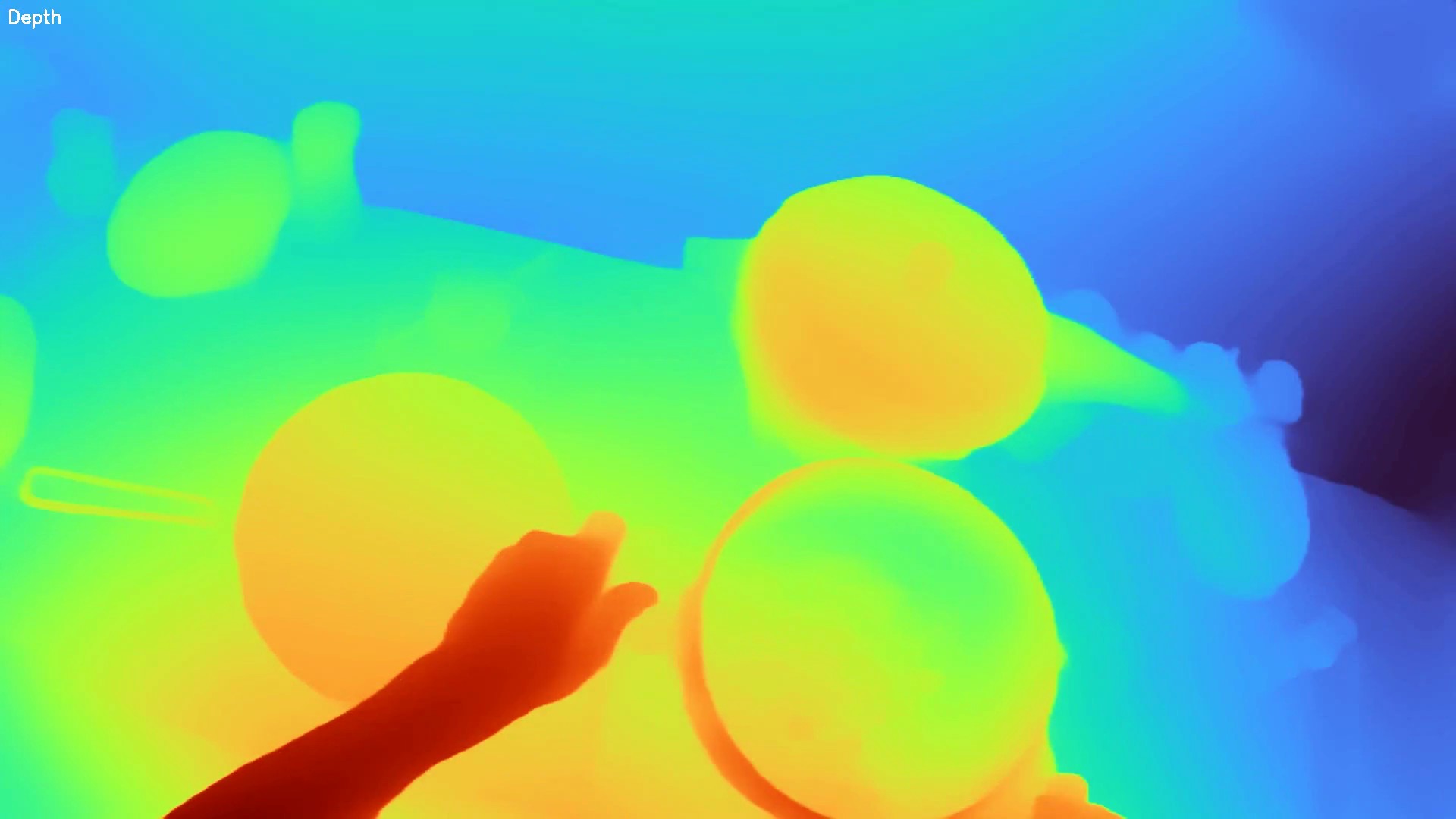

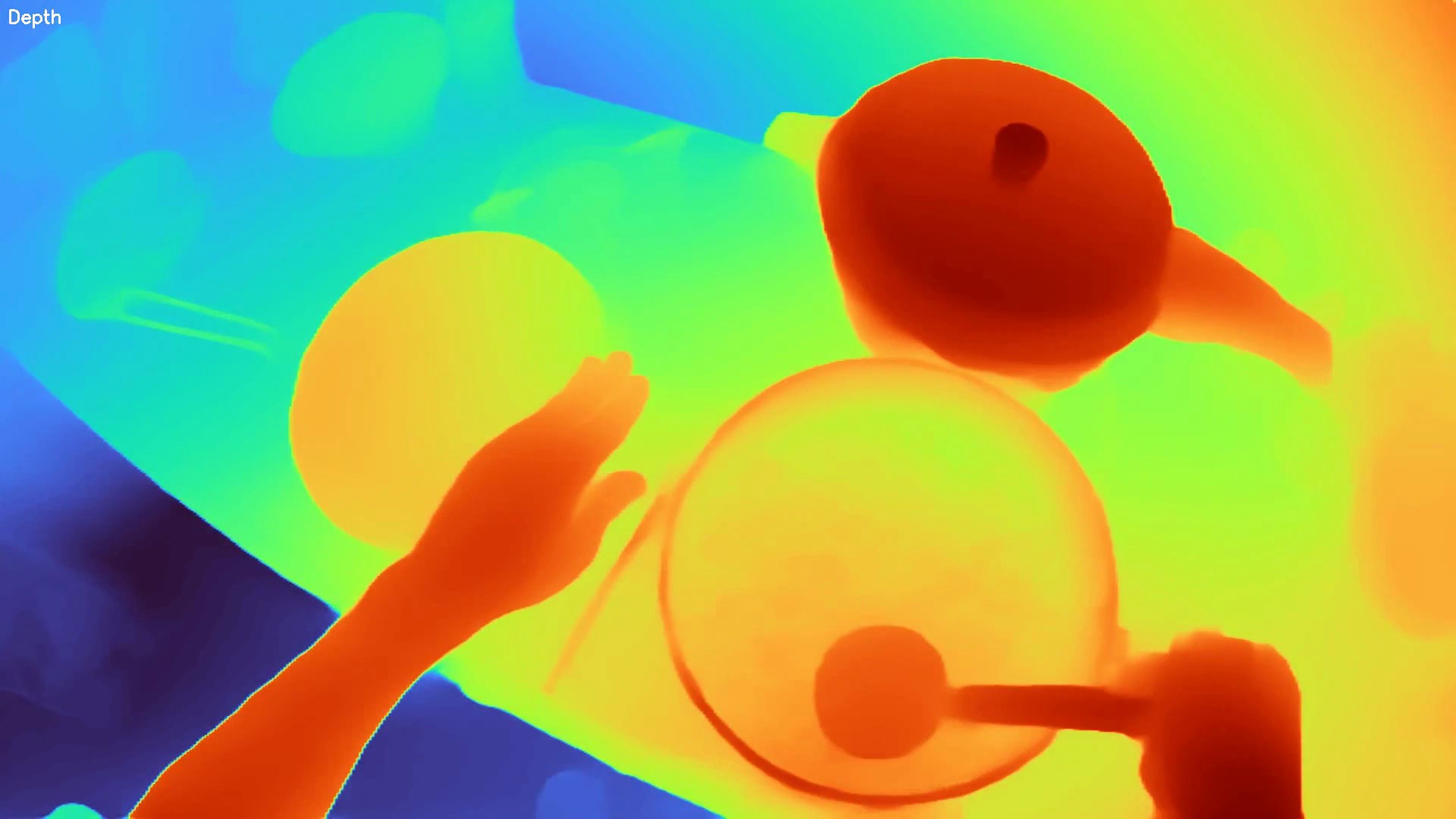

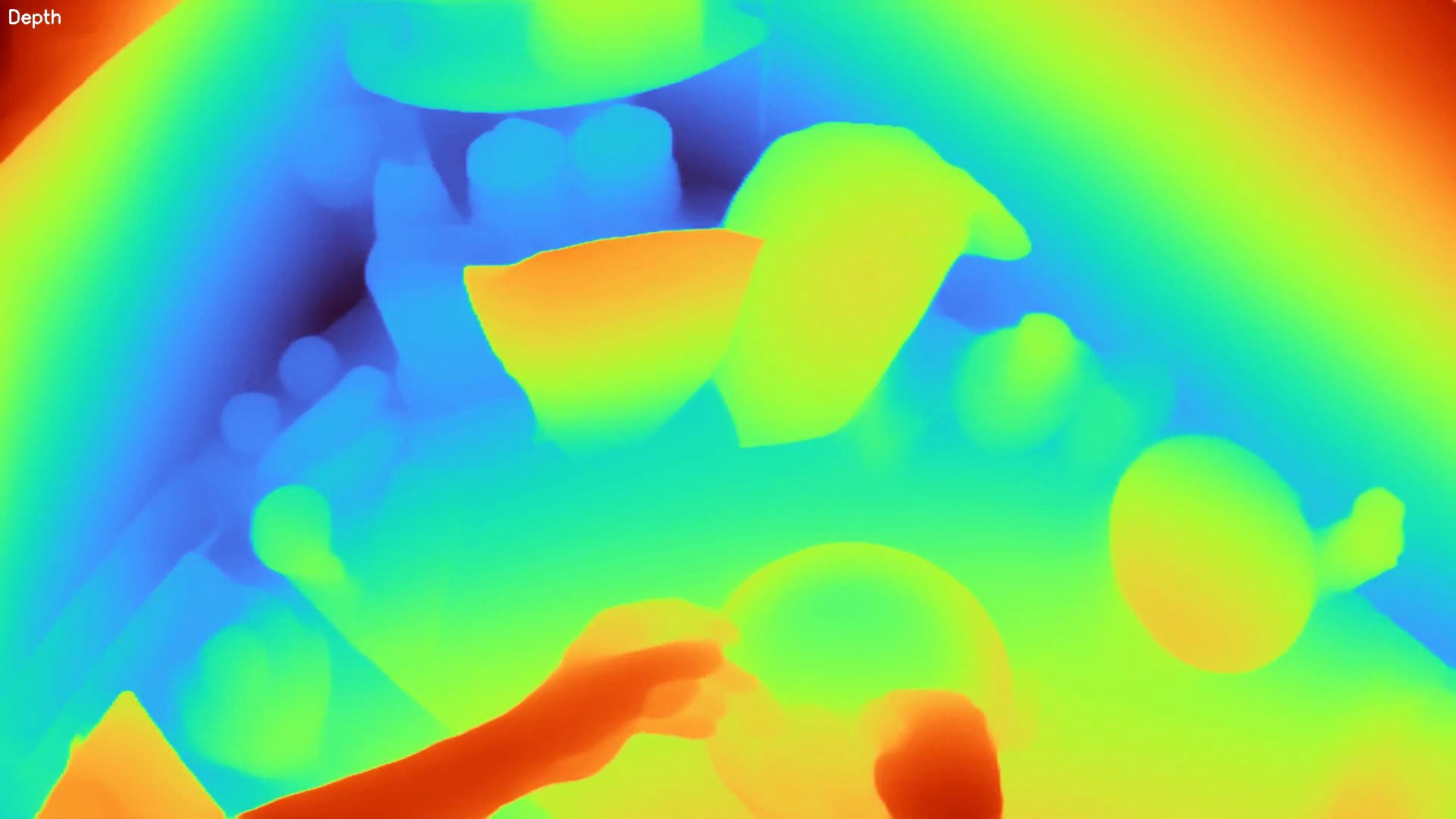

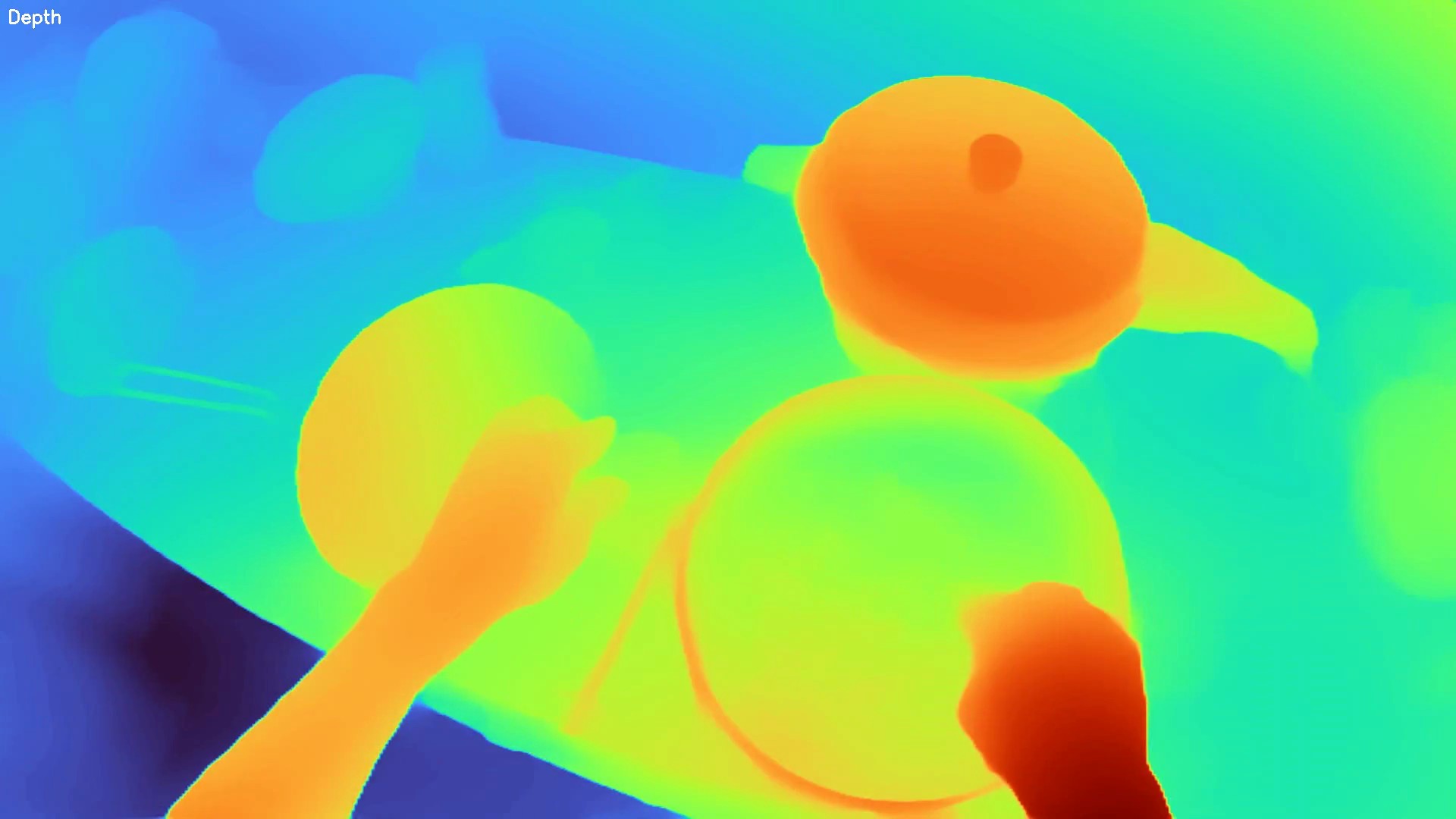

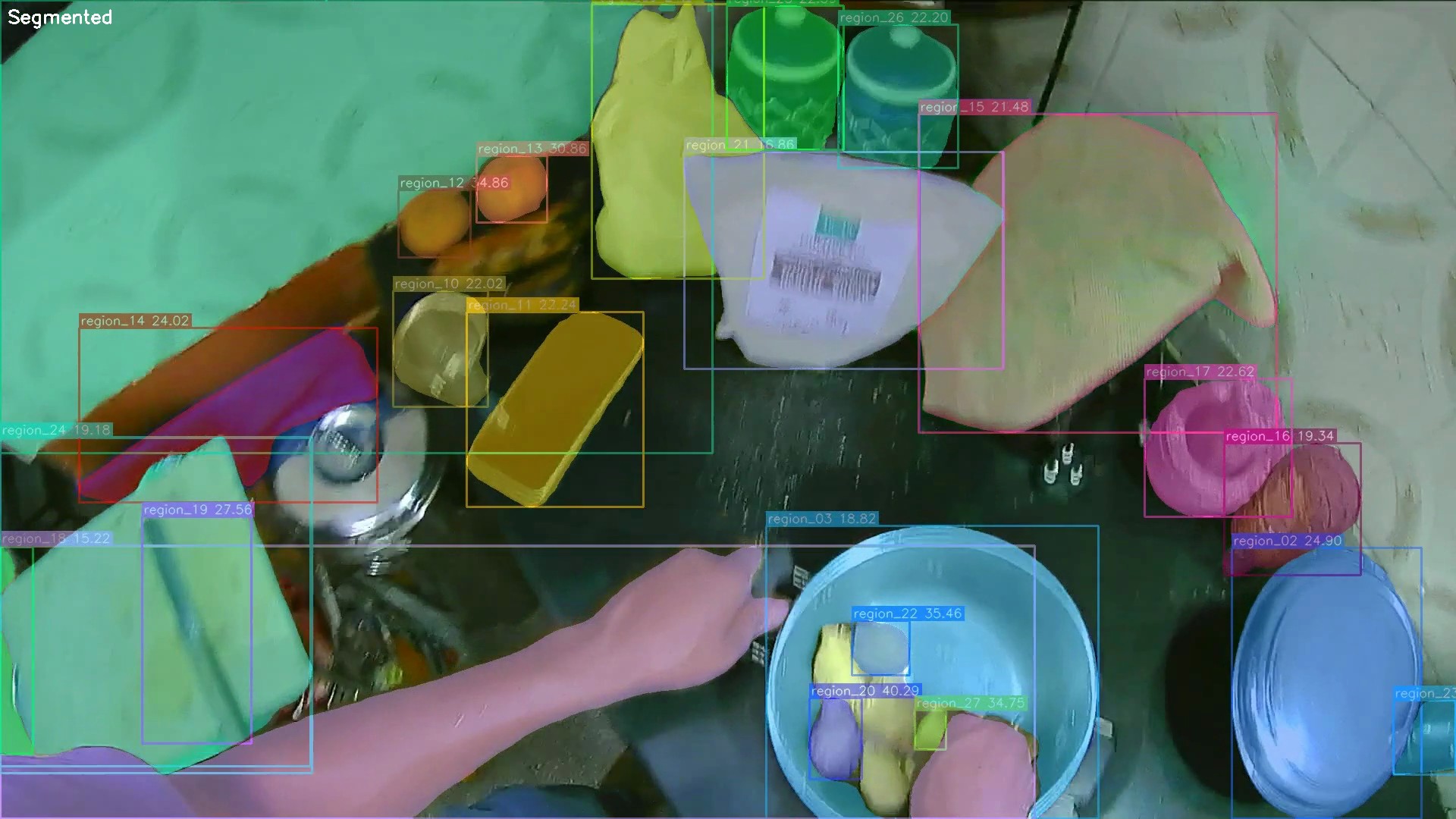

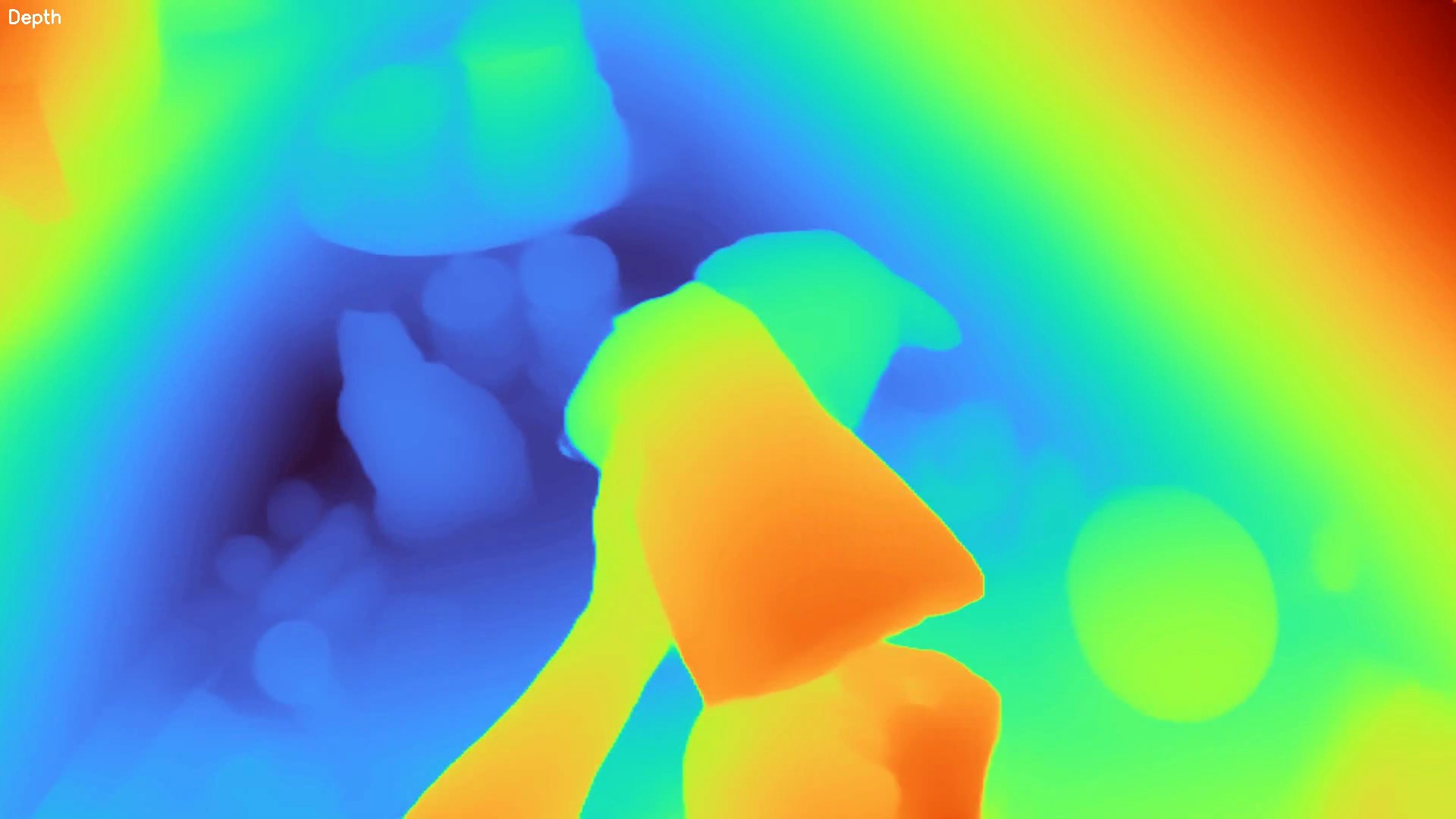

Instance segmentation, dense depth estimation, 6-DoF pose extraction.

Automated consistency validation. Outlier rejection. Quality scoring.

Multi-view reconstruction. Point clouds. Complete scene understanding.

500+ task types. Object classes. Environment metadata. One taxonomy.

LeRobot, Open X, HDF5. Streaming API. Version-controlled releases.

| MODEL / DATASET | SIZE | ROBOTS | TASKS |

|---|---|---|---|

| pi0 (Physical Intelligence) | 10K+ hrs | 7 | 68 |

| Open X-Embodiment (Google DeepMind) | 1M eps | 22 | 527 |

| OpenVLA (Berkeley/Stanford) | 970K eps | 22 | 527 |

| DROID (Stanford/Berkeley/CMU) | 76K traj | 1 | 84 |

| BridgeData V2 (Berkeley) | 60K traj | 1 | 13 |

| TRI LBMs (Toyota Research) | 1,700 hrs | 2 | 100s |

| Figure Helix (Figure AI) | ~500 hrs | 1 | bimanual |